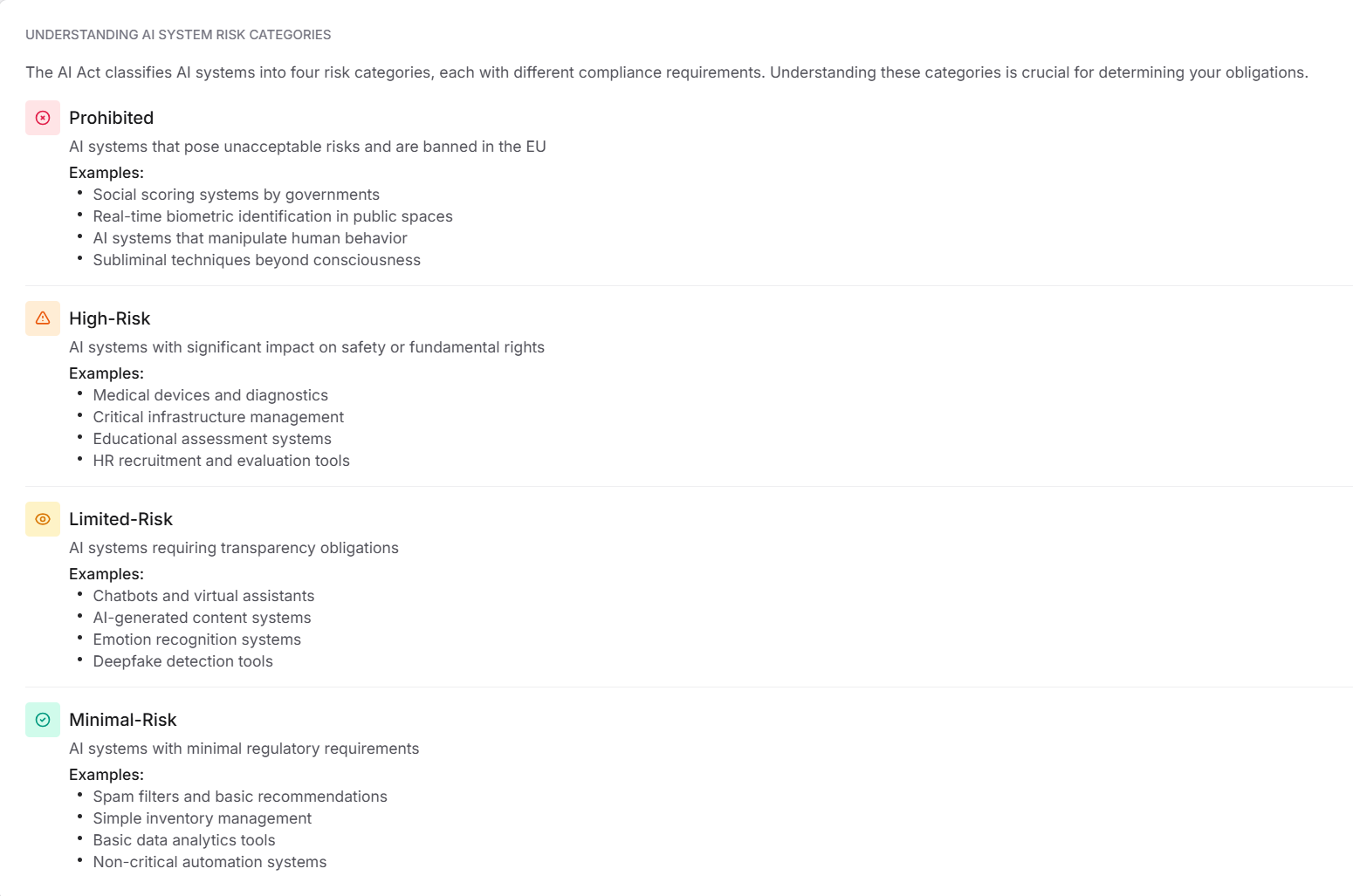

The EU AI Act classifies AI systems into four risk categories. Knowing which category a system falls into tells you which compliance obligations apply to it.

Prohibited

AI systems that pose unacceptable risks and are banned in the EU.

Examples: social scoring, real-time remote biometric identification in publicly accessible spaces for law enforcement (subject to narrow exceptions), and AI that manipulates behavior, including through subliminal techniques.

High-Risk

AI systems with a significant impact on safety or fundamental rights.

Examples: AI used in medical devices, critical infrastructure management, educational assessments, and HR recruitment tools.

Limited-Risk

AI systems that are subject to transparency obligations, such as telling users that they are interacting with an AI system.

Examples: chatbots, AI-generated content such as deepfakes, and emotion recognition.

Minimal-Risk

AI systems with minimal regulatory requirements.

Examples: spam filters, basic recommendations, simple inventory management, and non-critical automation tools.
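
If you keep an inventory of your AI systems, the four categories map naturally onto a small lookup structure. The following is a minimal sketch of that idea, not an official tool. The names `RiskTier`, `TIER_SUMMARY`, `INVENTORY`, `describe`, and `group_by_tier` are hypothetical, and the tier assigned to each sample system is an illustrative assumption rather than a legal determination.

```python
from enum import Enum


class RiskTier(Enum):
    """The four risk categories described in this article."""
    PROHIBITED = "Prohibited"
    HIGH = "High-Risk"
    LIMITED = "Limited-Risk"
    MINIMAL = "Minimal-Risk"


# One-line summary per tier, paraphrased from the descriptions above.
TIER_SUMMARY = {
    RiskTier.PROHIBITED: "Unacceptable risk; banned in the EU.",
    RiskTier.HIGH: "Significant impact on safety or fundamental rights.",
    RiskTier.LIMITED: "Subject to transparency obligations.",
    RiskTier.MINIMAL: "Minimal regulatory requirements.",
}

# Hypothetical inventory. The tier assigned to each system is an
# illustrative assumption, not a legal classification.
INVENTORY = {
    "cv-screening-tool": RiskTier.HIGH,
    "support-chatbot": RiskTier.LIMITED,
    "spam-filter": RiskTier.MINIMAL,
}


def describe(system_name: str) -> str:
    """Return a one-line description of a system's assigned tier."""
    tier = INVENTORY[system_name]
    return f"{system_name}: {tier.value} ({TIER_SUMMARY[tier]})"


def group_by_tier(inventory: dict) -> dict:
    """Group system names by tier; tiers with no systems map to an empty list."""
    grouped = {tier: [] for tier in RiskTier}
    for name, tier in inventory.items():
        grouped[tier].append(name)
    return grouped
```

Calling `describe("support-chatbot")` returns a short summary line for that system, and `group_by_tier(INVENTORY)` gives a per-tier view that makes it easy to see which systems carry the heaviest obligations.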
